30 min read

Dive into prompt injection, AI's top vulnerability. Learn how attackers manipulate LLMs to bypass safety, steal data, or perform unauthorized actions through clever, hidden commands.

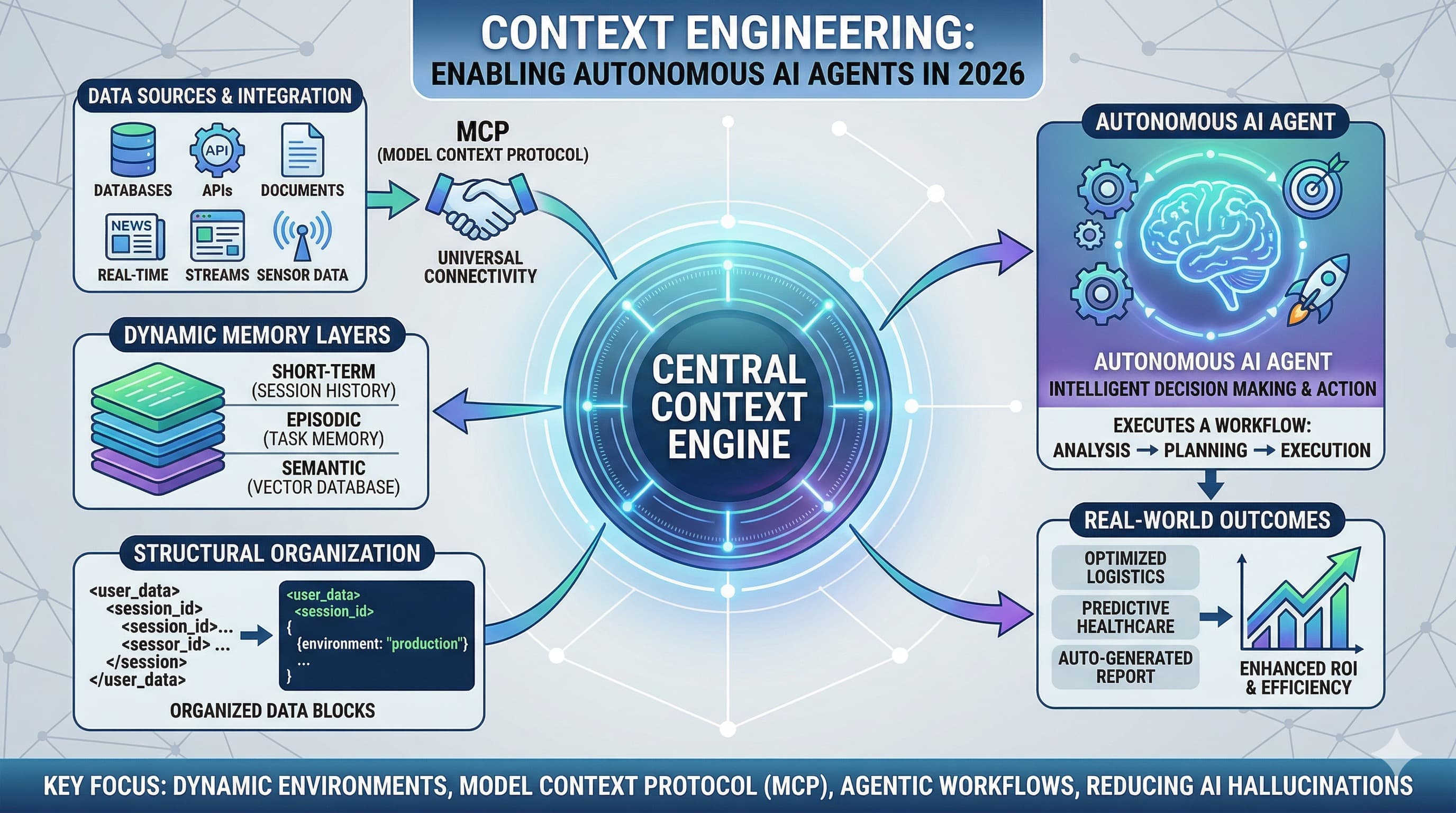

In 2026, the secret to reliable AI isn't just a better sentence; it’s Context Engineering. Learn how to architect dynamic environments for autonomous agents.

This post explores Markdown Prompting, a way of structuring prompts to get better answers from AI tools like ChatGPT, Gemini, and others.
